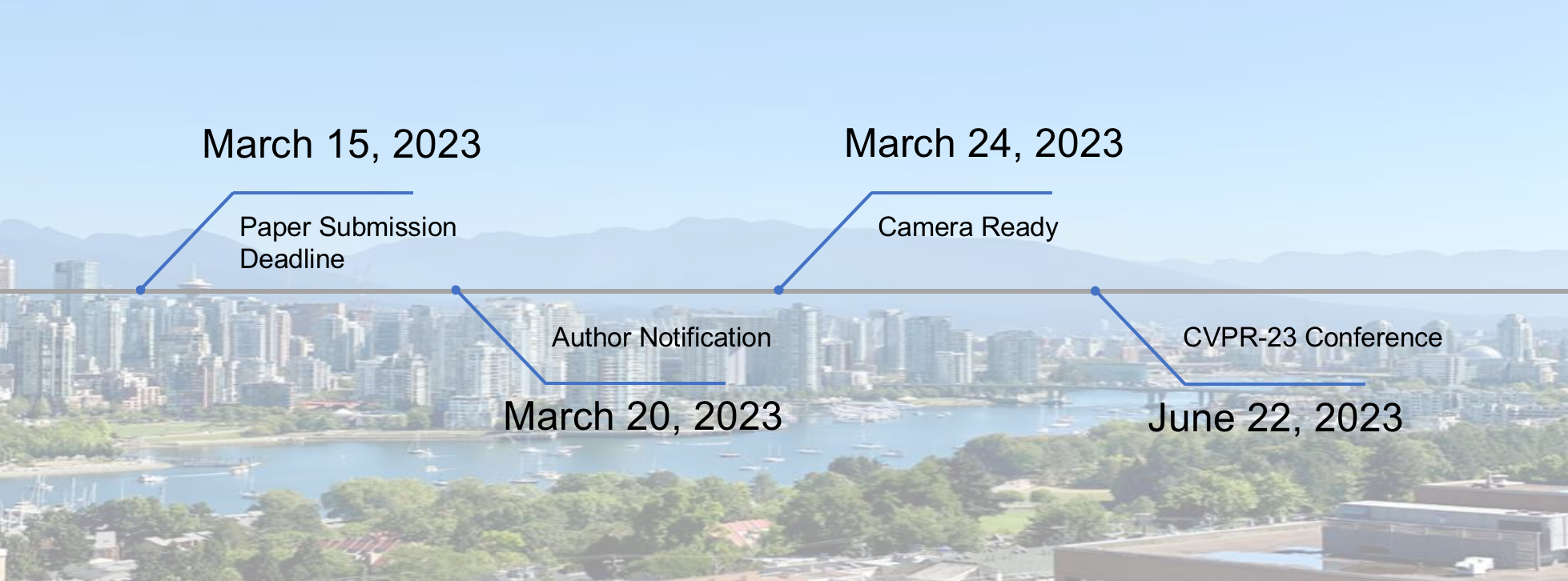

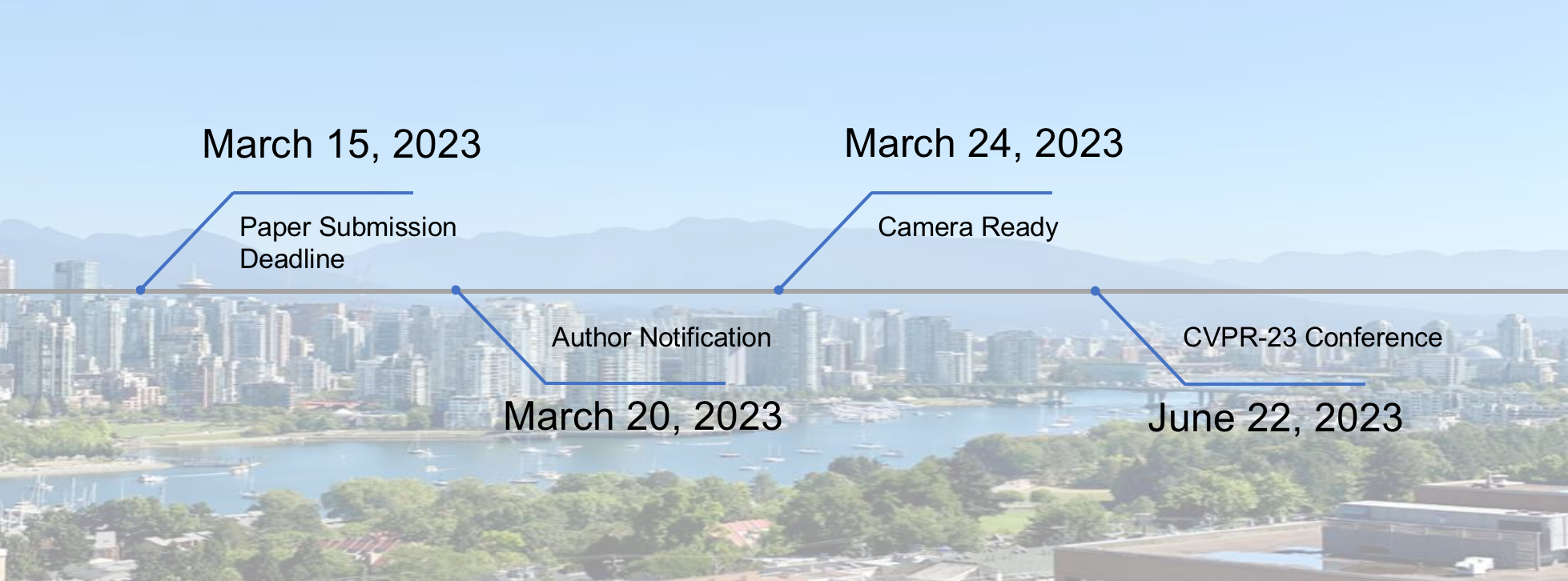


Deep learning has achieved significant success in many fields, including computer vision. However, studies in adversarial machine learning indicate that deep learning models are highly vulnerable to adversarial examples. A large body of work has shown that adversarial examples challenge the robustness of deep neural networks, threatening deep-learning-based applications in both the digital and physical worlds.

Though harmful, adversarial attacks can also benefit deep learning models. Properly discovering and harnessing adversarial examples can be highly valuable in several areas, including improving model robustness, diagnosing model blind spots, protecting data privacy, evaluating safety, and deepening our understanding of vision systems in practice. Because adversarial learning plays both a devil and an angel role, exploring robustness is an art of balancing and embracing both the light and dark sides of adversarial examples.

In this workshop, we aim to bring together researchers from computer vision, machine learning, and security to collaborate through a series of research presentations, lectures, and discussions. We will focus on the most recent progress and future directions regarding both the positive and negative aspects of adversarial machine learning, especially in computer vision. Unlike previous workshops on adversarial machine learning, this workshop aims to explore both the devil and angel characters of adversarial examples for building trustworthy deep learning models.

Overall, this workshop consists of invited talks from experts in this area, research paper submissions, and a large-scale online competition on building robust models.

ArtofRobust Workshop Schedule

| Event | Start time | End time |
|---|---|---|
| Opening Remarks | 9:00 | 9:10 |
| Invited talk: Aleksander Madry | 9:10 | 9:40 |
| Invited talk: Deqing Sun | 9:40 | 10:10 |
| Invited talk: Bo Li | 10:10 | 10:40 |
| Invited talk: Cihang Xie | 10:40 | 11:10 |
| Invited talk: Alan Yuille | 11:10 | 11:40 |
| Oral Session | 11:40 | 12:00 |
| Challenge Session | 12:00 | 12:10 |
| Poster Session | 12:10 | 12:30 |
| Lunch | 12:30 | 13:30 |
| Invited talk: Lingjuan Lyu | 13:30 | 14:00 |
| Invited talk: Judy Hoffman | 14:00 | 14:30 |
| Invited talk: Furong Huang | 14:30 | 15:00 |
| Invited talk: Ludwig Schmidt | 15:00 | 15:30 |
| Invited talk: Chaowei Xiao | 15:30 | 16:00 |
| Poster Session | 16:00 | 17:00 |

| Speaker | Affiliation |
|---|---|
| Alan Yuille | |
| Furong Huang | University of Maryland |
| Bo Li | University of Illinois at Urbana-Champaign |
| Cihang Xie | UC Santa Cruz |
| Deqing Sun | |
| Chaowei Xiao | Arizona State University |
| Aleksander Madry | Massachusetts Institute of Technology |
| Judy Hoffman | Georgia Tech |
| Ludwig Schmidt | University of Washington |
| Lingjuan Lyu | Sony AI |

| Name | Affiliation |
|---|---|
| Aishan | Beihang |
| Jiakai | Zhongguancun |
| Francesco Croce | University of Tübingen |
| Vikash Sehwag | Princeton University |
| Yingwei | Waymo |
| Xinyun | Google Brain |
| Cihang | UC Santa Cruz |
| Yuanfang | Beihang |
| Xianglong | Beihang |
| Xiaochun | Sun Yat-sen University |
| Dawn | UC Berkeley |
| Alan | Johns Hopkins |
| Philip | Oxford |
| Dacheng | JD Explore Academy |

Timeline

| Date | Challenge milestone |
|---|---|
| 2023-03-28 (UTC+8) | Registration opens |
| 2023-03-31 10:00 (UTC+8) | Phase Ⅰ submission starts |
| 2023-04-28 20:00 (UTC+8) | Registration and Phase Ⅰ submission deadline |
| 2023-05-01 10:00 (UTC+8) | Phase Ⅱ submission starts |
| 2023-05-31 20:00 (UTC+8) | Phase Ⅱ submission deadline |
| 2023-06 | Results announcement |

Award List

| Rank | Team | Prize |
|---|---|---|
| 1 | Huge | ¥20000 |
| 2 | violet | ¥15000 |
| 3 | team_wwwwww | ¥10000 |
| 4 | team_AIHIA | ¥9000 |
| 5 | hhh | ¥6000 |
| 6 | SJTU_ICL | ¥4000 |
| 7 | team_oldman | ¥1500 |
| 7 | puff | ¥1500 |
| 9 | 为了全人类 | ¥1500 |
| 10 | superKI | ¥1500 |

Challenge Chairs

| Name | Affiliation |
|---|---|
| Zonglei | Beihang |
| Haotong | Beihang |
| Siyuan | Chinese Academy |
| Ding | SenseTime |
| Yichao | SenseTime |
| Yue | NUDT & OPENI |
| Xianglong | Beihang |
| Simin | Beihang |
| Shunchang | Beihang |
| Yisong | Beihang |